The Video Game Crash of 1983

Some of my oldest childhood memories revolve around my PlayStation 2. I was addicted to it. I wish there were a way for me to figure out how many hours I spent playing Lego Star Wars or Star Wars Battlefront 2, but my best guess is that it's in the hundreds, perhaps thousands. I remember my mom instituting a 30-minute time limit whenever I played and placing a timer next to me. I always cheated, though, adding time to it when she wasn't looking. As I got older and made more friends in school, I learned that quite a few kids had an Xbox 360, and I used to beg to play Halo whenever I was at one of their houses. Soon I struck a deal with my father: he would get me an Xbox, and I would work off the cost. I mowed lawns for several summers afterward and clocked an absurd number of hours into Halo: Reach.

It was all my friends and I did for years and years. We bonded over Xbox and stayed connected after school. It gave us something to discuss in class, and I definitely remember watching Call of Duty Zombies lore videos at lunch. It's hard to imagine gaming as the cultural giant it is without consoles. Yet, just a few decades before this, the home console market had imploded in what became known as the Video Game Crash of 1983.

Now, I'm going to discuss the lead-up to this event, but it involves bringing up a particular man. So, consider this a Ronald Reagan trigger warning for all of you Reagan haters out there.

The Consequences of Reagan

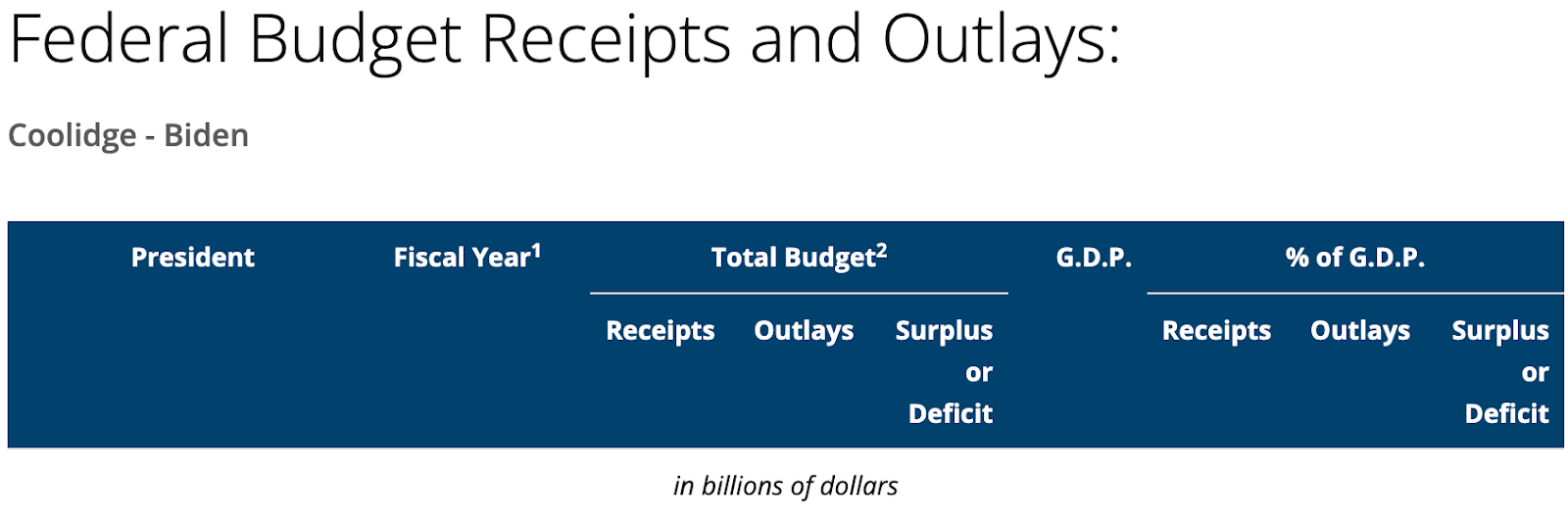

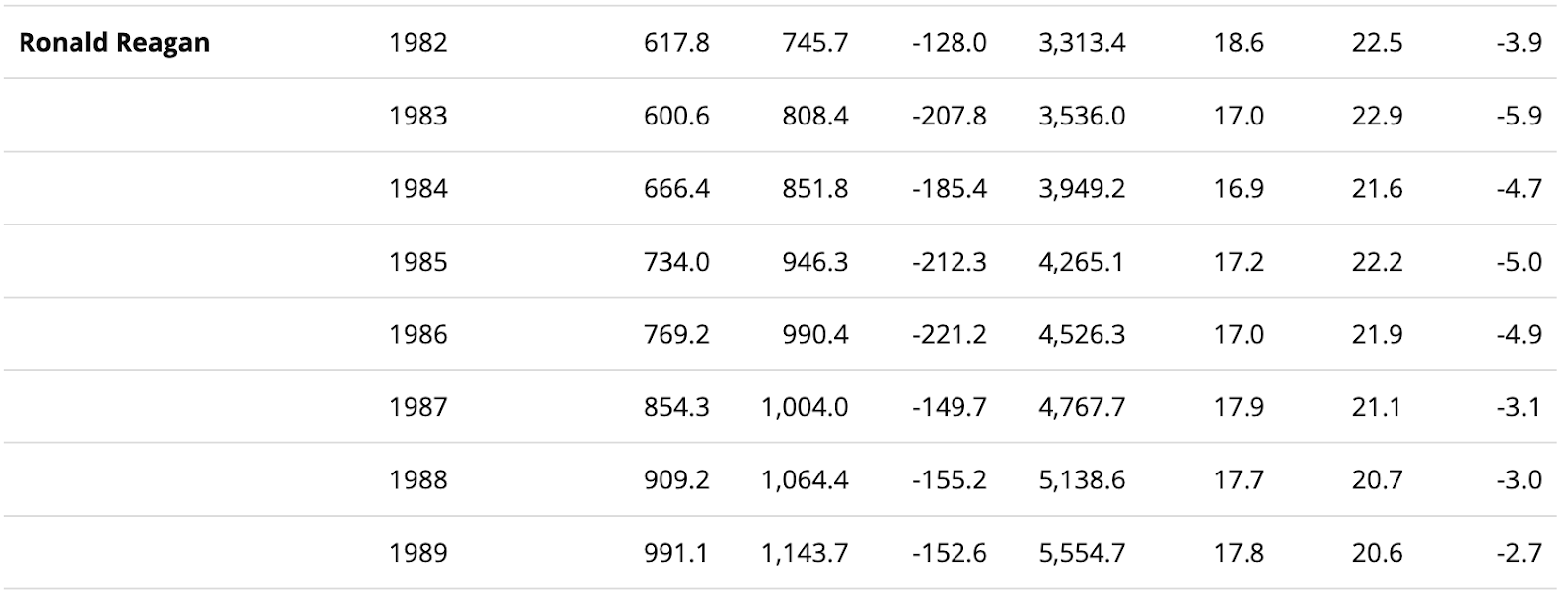

In 1980, Reagan was unfortunately elected as the 40th president of the United States. A recession also began that year. The 1970s had brought two energy crises and deregulation under the Carter administration, and by 1980 inflation and interest rates had hit record post-World War II highs. Needless to say, Reagan inherited a struggling economy. To combat this, he pushed supply-side economics, better known as Reaganomics. The idea was to cut taxes while encouraging greater production of goods. So, while the tax rate would be lower, the volume of goods being taxed would be larger. People, particularly the very wealthy, would supposedly retain more income to spend on these goods, and tax revenue would increase. The Economic Recovery Tax Act of 1981 was the first implementation of this idea, slashing income taxes across the board. However, he ended up raising taxes in 1982 (and again in 1983, 1985, and 1987), and federal spending grew throughout his presidency, from the hundreds of billions into the trillions. Despite all of his promises, the budget deficit increased.

A lot of companies began increasing their output during this period, including video game companies. It was a booming industry, worth about $3.2 billion by 1983. Atari was at the forefront of the home console market for a time, but many more companies were churning out consoles of their own. In the years leading up to the crash, Magnavox, Mattel, Atari, Coleco, and General Consumer Electronics had all released home consoles. As the market grew more crowded, its revenue was split among more and more companies, thinning profit margins.

In 1976, Atari was purchased by Warner Communications, which brought many changes to how the company was managed, particularly in how it treated workers. Developers weren't credited for their work and were denied bonus pay, despite Warner's promises to the contrary. A memo even circulated listing the best-selling games and their share of sales, supposedly to encourage workers to find further success.

“This was a one-page list of the top 20 selling cartridges from the previous year, with their percent of sales. The purpose of the memo was the hint: 'These type of games are selling the best. Do more like these.' But this memo also showed us whose games did well, not just the game type. We noticed that four of the designers in a department of 30 were responsible for over 60% of the sales. And since we knew that Atari's cartridge sales for the prior year was $100 million, it was a shock to know that four guys making $30k per year made the company $60 million. That will get anyone thinking about a piece of the pie.”

- Activision co-founder David Crane, in Game Developer

David Crane, Alan Miller, Larry Kaplan, and Bob Whitehead presented these statistics to Ray Kassar, then the company's president, and asked for more compensation given all of the money they had made for the company. However, Kassar wouldn't budge. So, they left and founded the now-famous game studio Activision. The young studio's big emphasis was crediting its developers by name and being transparent about who made its games. They found success very quickly, with Pitfall! being the most notable of the titles they released for the Atari 2600. Activision became the first third-party studio, and its success led to the market being flooded with hundreds of games from companies attempting to emulate it.

“They got two to three million dollars in VC funding (they found it very easy in light of our success), and hired programmers off the street. Without professional game designers, these companies developed low quality games that we would have deleted from our computers. With their VC money, however, they built millions of copies of these awful games. When we saw the poor quality of the games being developed, we shook our heads and said ‘These guys will all be out of business in a year.’ We just didn’t see what that would do to the business.”

- Activision co-founder David Crane, in Game Developer

Revenue began to plummet as people stopped buying games and consoles, leaving far more supply than demand. This marked the start of the crash in 1983. By 1985, industry revenue had fallen to around $100 million, a drop of roughly 97%. Atari took one of the largest blows, losing around $536 million in the first year of the crash. This led them to lay off around 1,700 staff, many of whom worked in manufacturing, labor they opted to outsource instead.

This may seem sudden, since that's such a large chunk of a workforce to let go all at once. However, the Reagan era shifted a lot of company mindsets to focus on the shareholder rather than the worker. In 1982, the SEC adopted Rule 10b-18, which effectively legalized stock buybacks, in which a company buys its own shares back from the public. Buybacks have been criticized as a form of stock manipulation, since reducing the number of shares in circulation tends to push up the share price. The intention is to make the company look more profitable and keep investors from jumping ship. However, this is also money diverted away from the labor force. To sustain the practice, companies began looking for cheaper sources of labor, and their gaze was often drawn overseas. Reagan popularized outsourcing.

By 1985, many companies had shifted their focus to developing for the increasingly popular personal computer (PC), but Nintendo revived the home console market when they released the Nintendo Entertainment System (NES) in the United States that year. What made this console stand out was that Nintendo advertised it as an entertainment system rather than a home computer or a video game console. Retailers had grown mistrustful of video games after the crash, since falling sales had hurt them too. Nintendo's approach was to reframe the console as a toy, even going so far as to create R.O.B., the now-beloved toy robot, as an accessory for the console. The other reason it stood out was that Nintendo had tightened their grip on what could and couldn't be released on the console. They created the 10NES lockout chip: a chip in the console checked for a matching chip in each cartridge and refused to play games that failed the check. They also placed a golden seal on officially licensed games to signify their quality. This helped strengthen their pool of third-party games and kept the market from being flooded again.

While the industry was able to recover after a time, the crash's effects on labor were felt for years. Many developers who left the industry in the '80s never came back. All of that talent, gone. Unionization has become an increasingly popular topic in recent years, but back then there wasn't much of a push for it. Like most bad things, we can blame Reagan for that. In 1981, the Professional Air Traffic Controllers Organization (PATCO) went on strike, demanding better working conditions for its members. Rather than negotiate, Reagan fired more than 11,000 striking controllers and replaced them, and the strike quickly collapsed. While the ordeal was short-lived, its impact was lasting. It set a precedent that the federal government would favor employers over unions at the negotiating table, and union membership declined in the years that followed.

Even though the video game industry was relatively new at the time, mass layoffs were carried out quite confidently both in and out of the industry. However, this didn't stop some of the laid-off Atari employees from fighting for what they were owed. In 1986, Atari settled a lawsuit and agreed to pay 537 former employees who were laid off in 1983 more than $600,000 in back pay. This was one of the first times an employer that gave no notice of a layoff had to pay back pay to the affected employees.

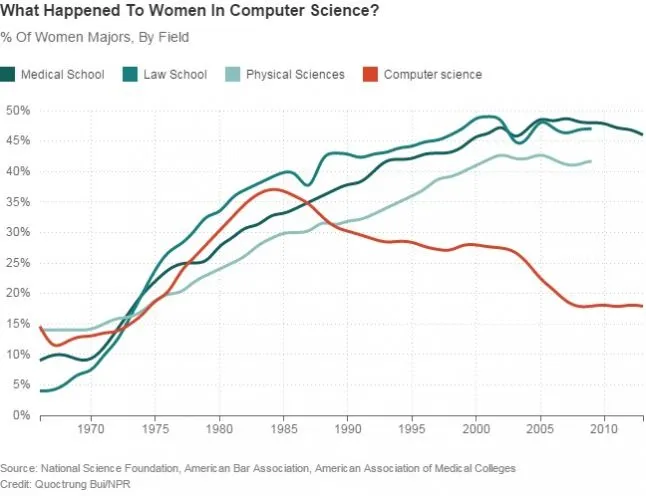

This was a win in a sort of revenge, "fuck you, give us our money" way, but mass layoffs didn't slow down. It's also important to note who was most affected. I've mentioned that manufacturing was one of the hardest-hit sectors as companies outsourced that labor, but here I mean the specific groups of people companies liked to target. As I said earlier, the PC rose to prominence during the 1980s. Women had been at the forefront of computing for decades (Margaret Hamilton, Katherine Johnson, Grace Hopper, and many more!), yet computer companies marketed the PC to men and boys, whom they saw as the prime target market. NPR reported on this in 2014, digging into the experiences of women in the '80s who wanted to pursue computing but had to overcome obstacles. In college computer science courses, some women said they felt behind on coursework because they had less experience with computers than the boys who had one at home. Ads were aimed at boys and men, featuring only male actors or sexualized women. It was a boys' club, and video games were no different.

Atari was at the forefront of the crash, and it should come as no surprise that they had also been at the forefront of sexism in the workplace. Atari co-founder Nolan Bushnell had a history of taking meetings in hot tubs and code-naming projects after women in the office he thought were "stacked." He was a vile man and maintained an environment that felt unsafe for women. Warner owned the company by the time of the crash, but that didn't mean the company culture had been wiped clean. Dona Bailey was a programmer at Atari shortly before the crash and is credited as a co-creator of one of its most popular games at the time, Centipede. When she was hired, she was the only woman among the 30 programmers who worked there. By the time she left in 1982, the ratio had gone from 1 in 30 to 1 in 120. What led to her leaving? She was tired. After Centipede was made, Atari's culture of hashing out ideas by crowding around a conference table or going on weekend retreats let the loudest voices in the room, all of them male, drown out her own. While there is no hard evidence of how many women left because of the culture the industry fostered during this period, stories like hers are all too familiar.

That brings us to now, a period in which the industry is once again struggling. It would be so easy for me to answer the question "How did this happen?" by jabbing a finger at a portrait of Ronald Reagan, and honestly I wouldn't necessarily be wrong to do so. A lot of the changes Reagan made during his tenure paved the way for... just a worse quality of life for the American worker. Stagnating wages, outsourcing, union busting: all lines trace back to that dreaded man.

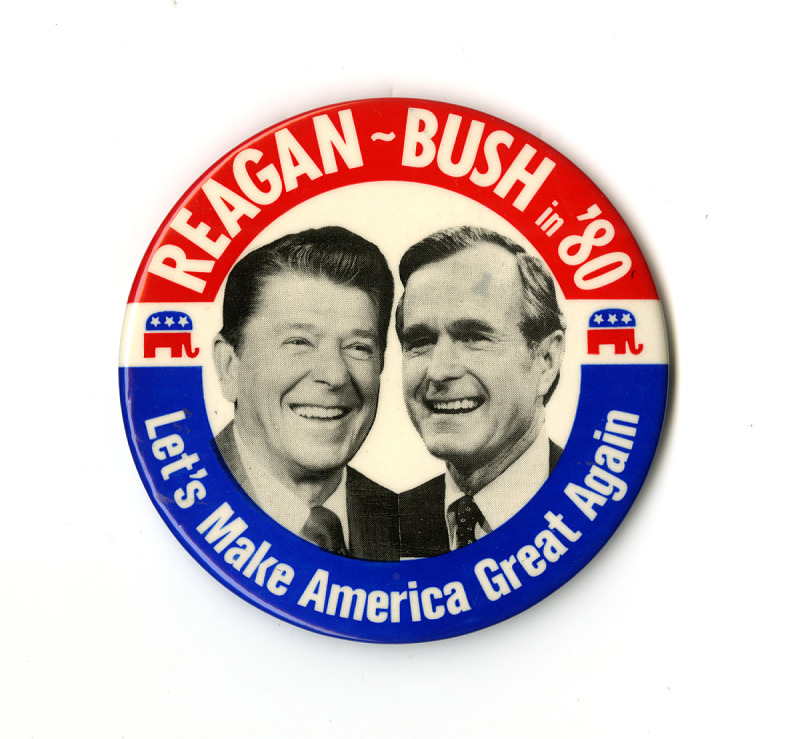

At the time of writing this article, Donald Trump is the sitting U.S. president. He was a wealthy television star with no experience holding public office prior to his first election win in 2016. He ran on cutting taxes, deregulation, and making America great again. If the resemblance to a certain other president isn't clicking, here is a real Ronald Reagan campaign button:

Layoffs are also still rampant. Since 2022, an estimated 40,000 people have been laid off in the industry, including an estimated 1,100 in 2026 alone, and it's only February. Yet the industry still makes billions of dollars. The most common target of blame is the rise of generative artificial intelligence. AI has been used in game development for a long time, but generative AI poses a unique threat to creatives. It's essentially a plagiarism tool that takes data from human-made works and frankensteins it all together based on the prompt it's given. Not to mention, data centers used for this technology can consume up to 5 million gallons of water per day. Companies have poured money into this technology on the promise that one day it will be good enough to replace labor, lower company costs, and appease shareholders.

Sexism is also still rampant in the industry. Most notably, in 2021 the California Department of Fair Employment and Housing (now the Civil Rights Department) filed a lawsuit against Activision Blizzard over its history of sexual misconduct and discrimination in the workplace. Many of the stories that came out of this investigation are horrid and would make anyone with an ounce of empathy sick to their stomach. For those who wish to parse through the lawsuit, here is just one of many articles published about it. It's upsetting and infuriating, yet it's important to feel all of that, because those are exactly the emotions that fuel change. Also, remember that this was just 5 years ago! (The CEO of Activision Blizzard at the time, Bobby Kotick, is also in the Epstein files. This feels important to mention.)

It feels grim, to be honest. Perhaps even worse than the crash of '83. I graduated with a Game Design degree in 2022, the year the layoffs began. I remained unemployed for about a year as the pool of junior positions dried up. I took a job in IT. I hated it. I quit after a year and a half to try to break into games again, only to find the industry in a worse state than before. It became easier to feel hopeless. Even now, as much as I loathe the idea, I feel that IT will likely be my career path for now. "For now" is the load-bearing phrase so many of us are hanging onto. While I know people who have resolved to pursue other paths, so many others are simply trying to survive. Not just survival out of self-preservation, but survival in the sense of trying to make it all habitable again. Just last year, the labor union United Videogame Workers launched in partnership with the Communications Workers of America (CWA). In the last few years, efforts to unionize in the industry have soared, and according to a CWA press release, workers at the Microsoft-owned studio id Software voted to unionize in December 2025. Hardship fosters community, and despite the late-stage capitalist hellscape we find ourselves in, collective action still exists.

If you're a game developer, I can't tell you whether or not to stay in the industry, nor can I discern what the right answer is. What I can tell you is how I feel personally. The entire reason I run this blog and still work on games on the side is the devs I have spoken to and look up to. People who are all in the same boat, bailing out water coming through the holes drilled by executives, AI, and Reagan. I figured, why not grab a bucket too? It's easy to believe that my bucket won't make a difference, but if all of us grab one, we can make enough progress to begin plugging the holes. Admittedly, it's exhausting, and hope isn't an easily maintained fuel source, but spite is, at least for me. So, if hope doesn't work, then do it out of spite for all of the shitty people who put us in this situation. They'd hate to see us winning, and personally, I think living my life by the mantra "What would Reagan hate?" is the most valid way to do it.

Edited by Marie Bogdanoff

For further information related to this topic, I recommend the following: